
Cole Tramp

His client relationships go beyond the surface as he strives to know and understand each of them and their specific goals and dreams. Cole is a deliberative person with the unique ability to identify, assess and minimize risks with regard to achieving these goals and dreams.

These abilities are the cornerstone of his extensive experience assisting clients. Cole holds more than 25 certifications, all related to cloud, security, and data, and he is pursuing more because he loves the challenge. Cole graduated from Simpson College in Indianola, Iowa, with a B.A. in management information systems.

Recent Posts

Overview

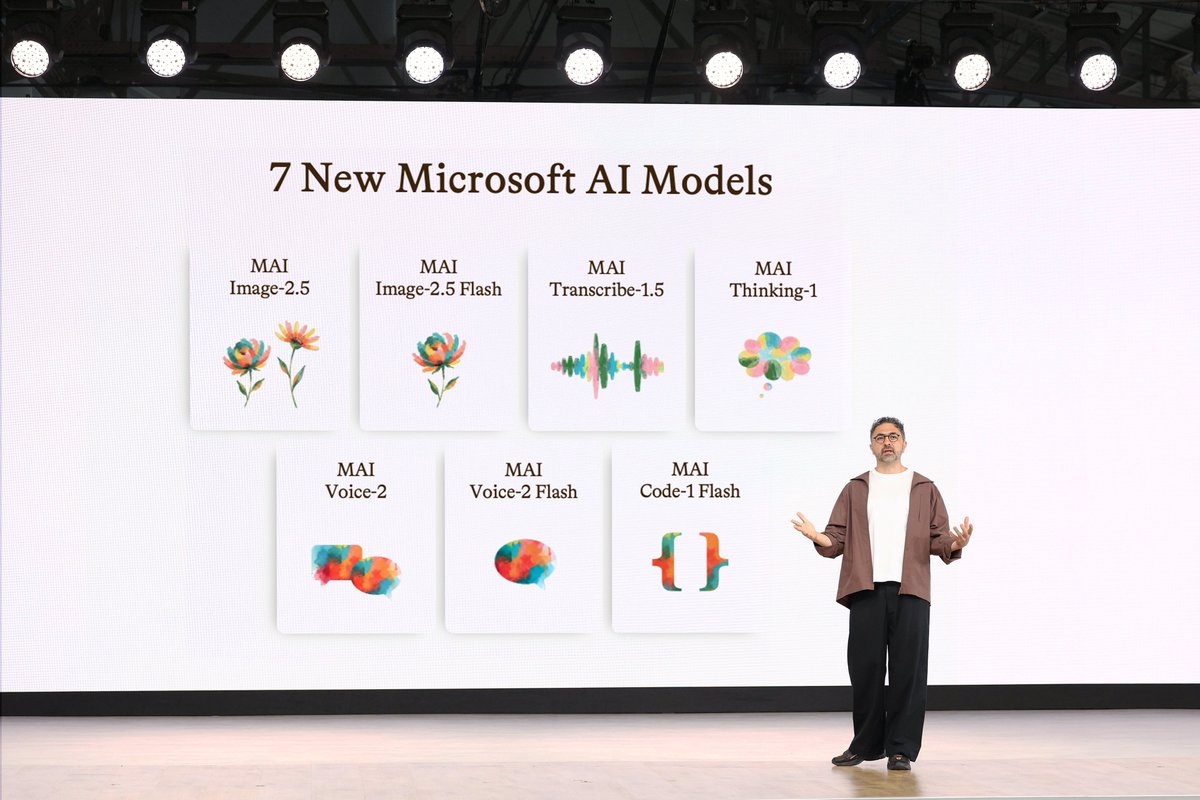

As organizations continue adopting generative AI, one of the most important decisions is how to improve the quality, accuracy, and usefulness of AI outputs. Three common approaches are Retrieval-Augmented Generation (RAG), prompt engineering, and fine-tuning. Each approach helps AI perform better, but they solve different problems and should be used for different business needs.

For executives and decision makers, the goal is not to choose the most technical option. The goal is to choose the approach that best aligns with the business outcome. Some organizations need AI to access current enterprise data. Others need better instructions and more consistent responses. Some need a model that is more deeply customized to a specific domain, workflow, or communication style. Understanding the difference between these approaches helps organizations invest in AI more strategically and avoid unnecessary complexity.

Retrieval-Augmented Generation

Overview

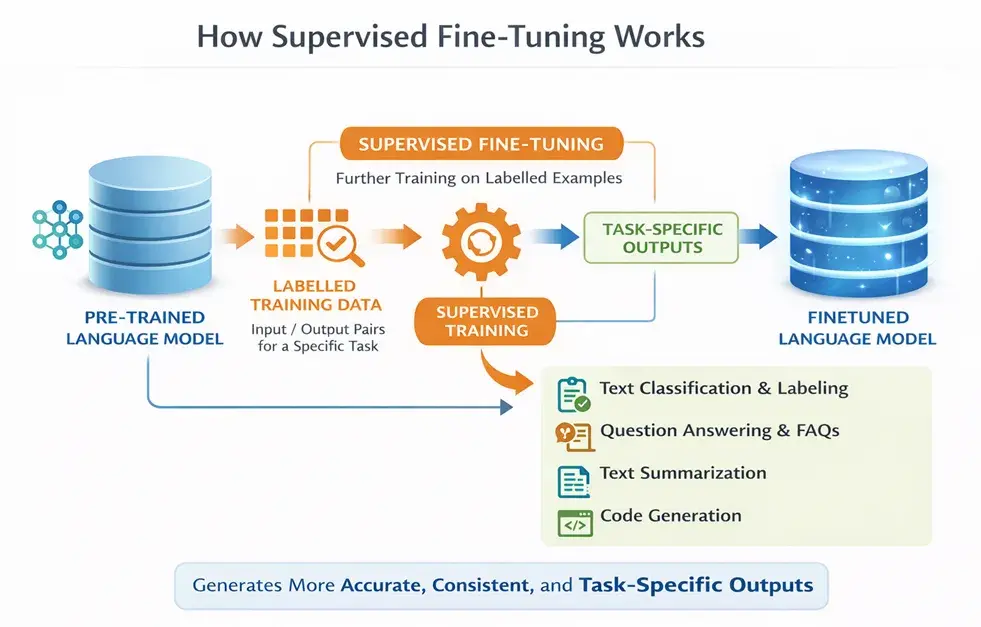

As organizations continue adopting generative AI, many leaders quickly realize that general-purpose AI models are not always optimized for their specific business needs. While large language models are powerful, they are typically trained on broad public datasets and may not fully understand an organization’s terminology, workflows, customer interactions, or industry-specific requirements.

Fine-tuning helps solve this challenge by taking a pre-trained AI model and further adapting it using smaller, targeted datasets that are specific to the business or use case. Instead of building a model entirely from scratch, organizations can refine an existing model to improve accuracy, consistency, tone, and relevance for their environment.

For executives and decision makers, fine-tuning represents a way to move AI from being a general productivity tool into a more business-aware solution. It can help organizations improve customer experiences, streamline operations, create more accurate AI assistants, and better align AI outputs with internal policies and processes.

Common use cases include:


Overview

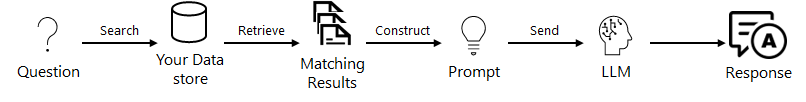

As organizations adopt generative AI, one of the biggest challenges is making sure AI responses are accurate, relevant, and grounded in trusted business information. Large language models are powerful, but they do not automatically know your company’s policies, procedures, customer data, product documentation, or most current information.

Retrieval-Augmented Generation, or RAG, helps solve this problem by connecting AI to trusted knowledge sources before it generates a response. Instead of relying only on what the model was trained on, RAG retrieves relevant information, adds it as context, and allows the model to generate a more accurate and business-specific answer.
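
The retrieve, add context, generate flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `DOCUMENTS` list, the word-overlap scoring, and the function names are all assumptions made for the example, and a real system would typically use a search index or vector store and then send the assembled prompt to a language model.

```python
import re

# Hypothetical knowledge base; in practice this would be trusted enterprise content.
DOCUMENTS = [
    "Employees accrue 15 vacation days per year.",
    "Expense reports are due by the fifth business day of each month.",
    "The cafeteria is open from 8am to 3pm.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by shared words with the question (a stand-in for real search)."""
    question_tokens = tokenize(question)
    scored = [(len(question_tokens & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Keep only the best matches that share at least one word with the question.
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_prompt(question: str, context: list[str]) -> str:
    """Add the retrieved passages as context ahead of the question."""
    context_block = "\n".join(f"- {passage}" for passage in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}"
    )


question = "When are expense reports due?"
context = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, context)
print(prompt)  # This prompt would then be sent to the model of your choice.
```

The point of the sketch is the ordering: the model only sees the question after the relevant business content has been placed in front of it.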

Why RAG Matters


Overview

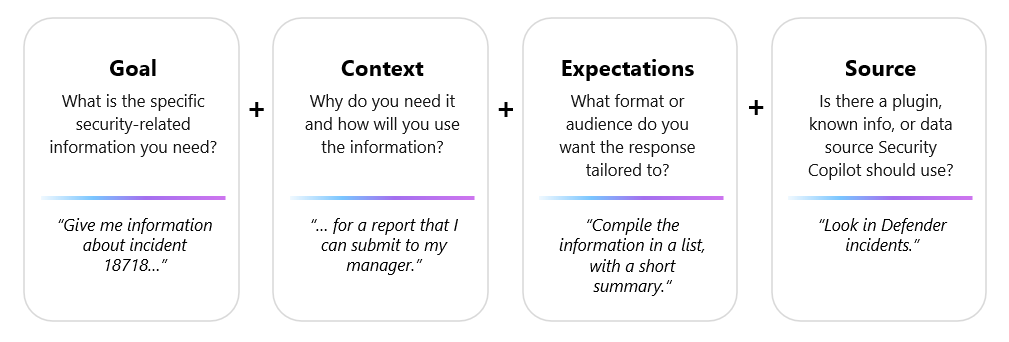

As organizations adopt generative AI, one of the most important skills is learning how to ask better questions. Prompt engineering is the practice of designing and refining prompts so AI models can better understand intent, follow instructions, and produce useful responses.

For executives, prompt engineering should not be viewed as a technical trick. It is a business capability. A well-crafted prompt can improve the quality, consistency, and relevance of AI-generated outputs, whether the use case is summarizing documents, drafting communications, analyzing data, supporting customer service, or helping employees find information faster.

The value comes from giving the model the right mix of instructions, context, examples, and desired output format. In many cases, the difference between a generic response and a useful business answer is not the AI model itself. It is how clearly the request was framed.
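
As a rough illustration of that mix, the sketch below assembles a prompt from the four ingredients named above plus the actual request. The function name, section labels, and sample text are hypothetical choices for this example, not a standard template.

```python
def build_business_prompt(
    instructions: str,
    context: str,
    examples: list[tuple[str, str]],
    output_format: str,
    request: str,
) -> str:
    """Combine instructions, context, examples, and output format into one prompt."""
    example_block = "\n".join(
        f"Input: {sample_in}\nOutput: {sample_out}"
        for sample_in, sample_out in examples
    )
    sections = [
        f"Instructions:\n{instructions}",
        f"Context:\n{context}",
        f"Examples:\n{example_block}",
        f"Output format:\n{output_format}",
        f"Request:\n{request}",
    ]
    # Blank lines between sections keep each part easy for the model to tell apart.
    return "\n\n".join(sections)


business_prompt = build_business_prompt(
    instructions="Summarize the document for an executive audience in plain language.",
    context="The reader is a CFO reviewing a vendor contract.",
    examples=[
        ("A 40-page security audit",
         "Three bullets: key risk, cost impact, recommended action"),
    ],
    output_format="Three bullet points, each under 20 words.",
    request="Summarize the attached vendor contract.",
)
print(business_prompt)
```

Compare this with sending only "Summarize the attached vendor contract." The request is the same, but the framed version tells the model who the reader is, what good output looks like, and how long it should be.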

Popular Techniques


Overview

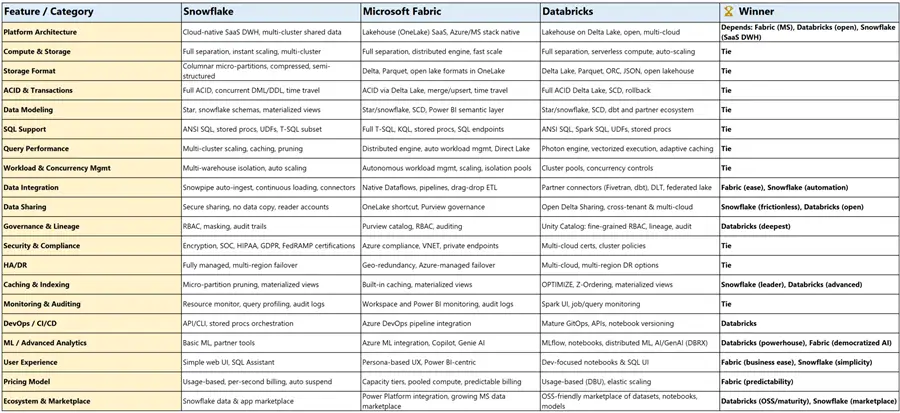

A September 24, 2025 MSSQLTips comparison of Microsoft Fabric, Databricks, and Snowflake made one thing clear: this is no longer a decision between narrowly defined tools. All three platforms now extend well beyond where they started, with overlap across data engineering, warehousing, AI, governance, and real-time workloads.

What stands out even more is timing. That comparison took place more than half a year ago, and these platforms continue to evolve rapidly. Microsoft continues to expand Fabric as an end-to-end SaaS platform, Databricks continues to deepen its lakehouse and AI capabilities, and Snowflake continues to broaden its cloud data platform story well beyond traditional warehousing.

This brings me back to one of the first things I learned in IT: it is okay to be biased about technology, as long as that bias is grounded in business reality. The best platform is not the one with the longest feature list. It is the one that best fits your people, your environment, and your ability to execute.

Quick Comparison


Overview

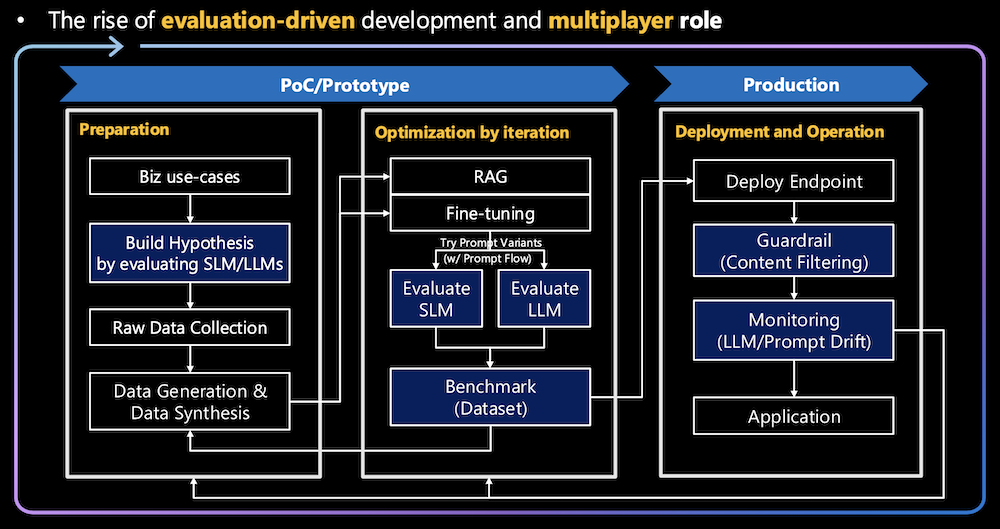

As organizations scale their AI strategies, choosing between a small language model (SLM) and a large language model (LLM) becomes as much a business decision as a technical one. SLMs are typically valued for their efficiency, lower cost, and ability to perform targeted tasks well, while LLMs are better suited for broader reasoning, deeper context handling, and more advanced generative capabilities.


Overview

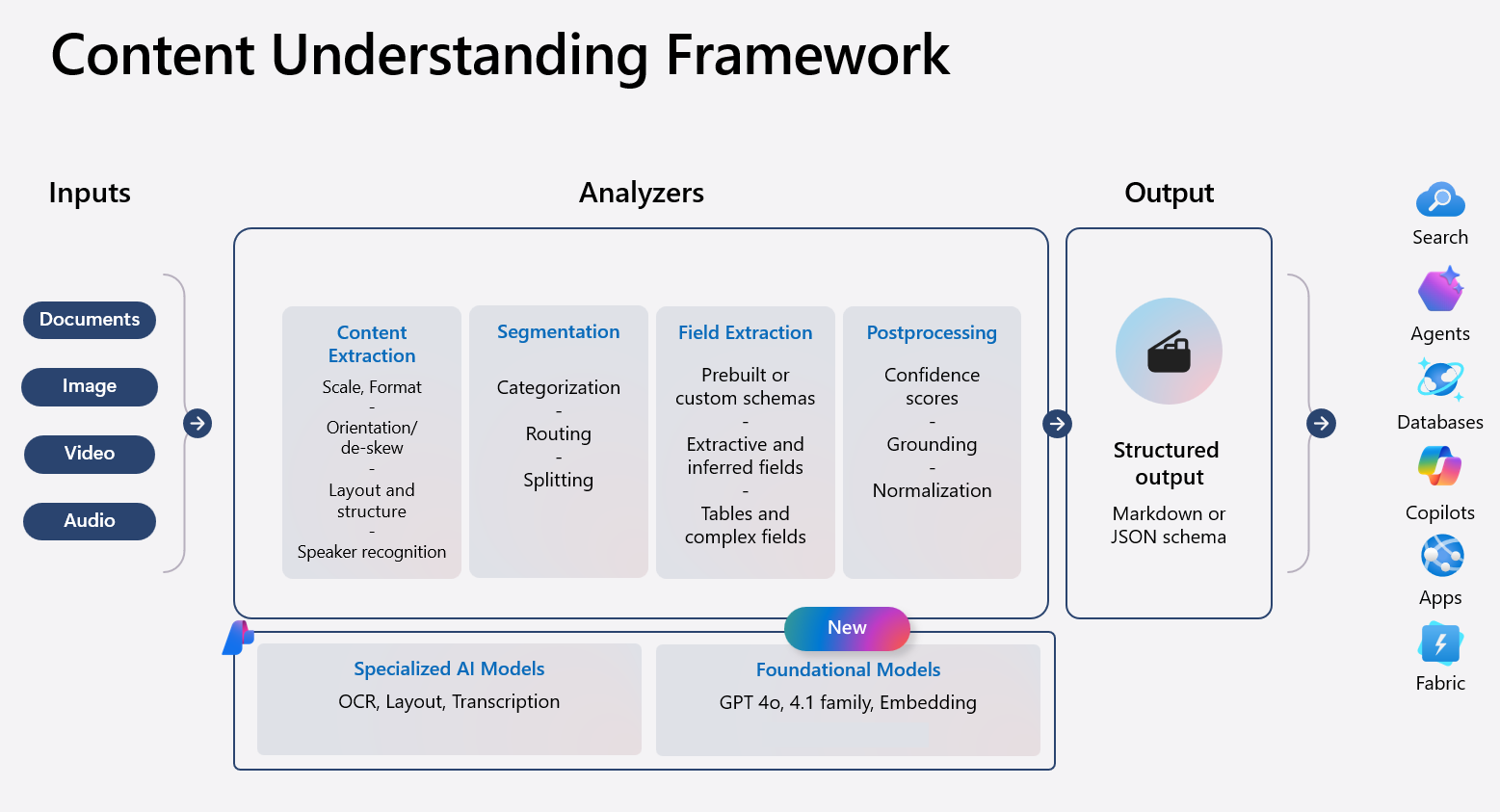

Most organizations are rich in content but poor in usable insight. Documents, PDFs, images, videos, and audio files hold critical business information, yet much of it is locked away in formats that are difficult to automate, analyze, or govern. This creates operational drag, manual review cycles, and increased costs.

Azure Content Understanding is Microsoft’s AI service designed to change that. It helps organizations consistently analyze and understand unstructured content and turn it into structured, reliable, and reusable information. Instead of fragmented tools and manual effort, Content Understanding provides a unified way to extract meaning from content with accuracy, confidence scores, and governance built in.

For technology leaders, the value is not just AI capabilities, but faster time to value, reduced operational cost, and greater confidence in automation and AI-driven decisions.

Why Use Azure Content Understanding


Overview

Microsoft Fabric continues to evolve beyond a unified analytics platform and into an agent-driven system that actively helps users understand data and operate systems. Two of the most important building blocks of this direction are Data Agents and Operations Agents. While both leverage AI, they serve very different purposes. One focuses on understanding data, and the other focuses on acting on real-time conditions. Together, they represent Microsoft’s shift toward embedding intelligence directly into analytics and operations rather than layering it on afterward.

What Data Agents Are Good At

Overview

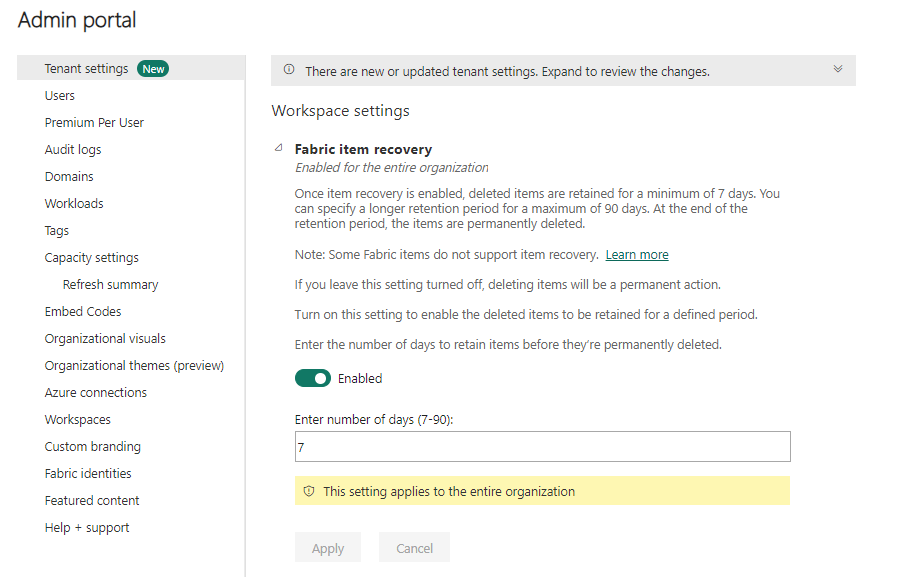

As Microsoft Fabric environments mature and become more collaborative, the risk of accidental deletion increases. A data engineer cleaning up a workspace, an analyst removing unused assets, or a contributor misunderstanding dependencies can easily delete the wrong item. Until recently, that deletion was permanent.

Microsoft Fabric now introduces item-level recovery through soft delete, providing a critical safety net for supported Fabric items. This capability complements existing workspace retention and adds fine-grained protection at the item level.

Item recovery allows deleted items to be retained for a configurable period, during which authorized users can restore them or permanently delete them. This feature is currently available in preview and must be explicitly enabled at the tenant level.
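
To make the retention-window idea concrete, here is a small conceptual model of soft delete in Python. It is not the Fabric API and does not reflect how Fabric implements the feature: the `ItemRecycleBin` class, its methods, the item name, and the seven-day window are all hypothetical, and the authorization check mentioned above is left out for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class ItemRecycleBin:
    """Conceptual model: deleted items stay recoverable until the retention period ends."""

    retention: timedelta
    _deleted_at: dict[str, datetime] = field(default_factory=dict)

    def soft_delete(self, item: str, now: datetime) -> None:
        """Record the deletion time instead of removing the item outright."""
        self._deleted_at[item] = now

    def restore(self, item: str, now: datetime) -> bool:
        """Restore the item if it was soft-deleted and is still inside the window."""
        deleted_at = self._deleted_at.get(item)
        if deleted_at is None or now - deleted_at > self.retention:
            return False
        del self._deleted_at[item]
        return True

    def purge(self, item: str) -> None:
        """Permanently delete the item before the window expires."""
        self._deleted_at.pop(item, None)


recycle_bin = ItemRecycleBin(retention=timedelta(days=7))  # assumed retention period
deleted_on = datetime(2025, 1, 1)
recycle_bin.soft_delete("sales_lakehouse", deleted_on)
restored = recycle_bin.restore("sales_lakehouse", deleted_on + timedelta(days=3))
print(restored)
```

The same restore call made after the retention period, or after a purge, would return `False`, which is the behavior that separates soft delete from the permanent deletion described earlier.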

Prerequisites and Configuration

