Overview

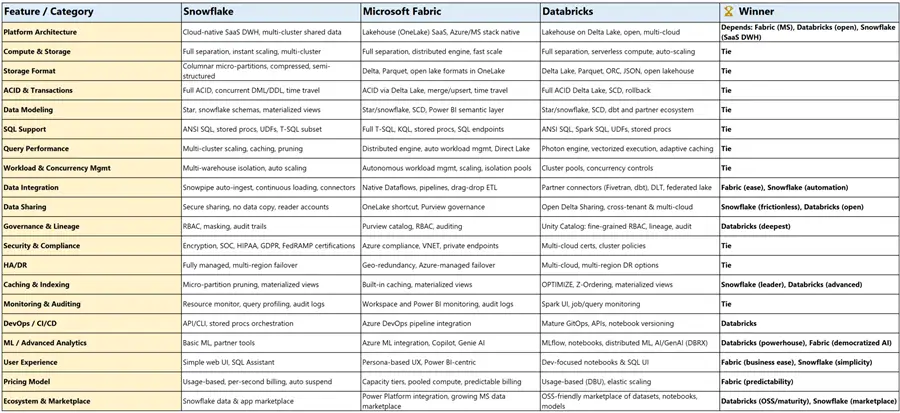

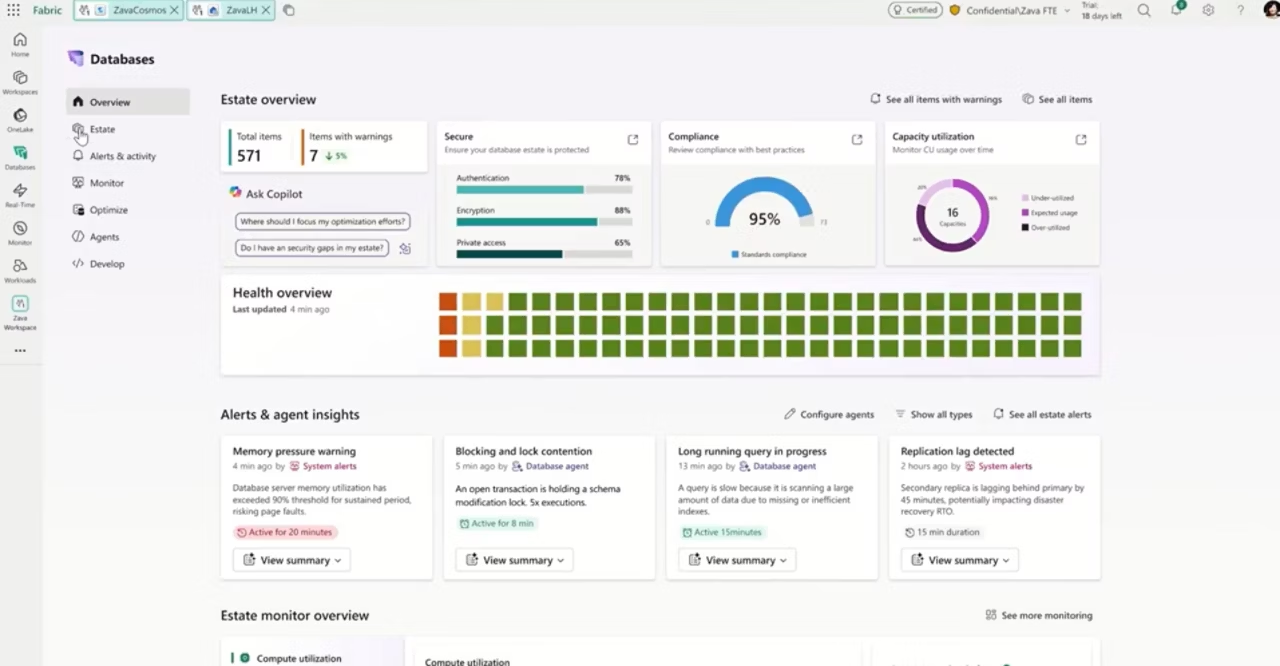

A September 24, 2025 MSSQLTips comparison of Microsoft Fabric, Databricks, and Snowflake made one thing clear: this is no longer a decision between narrowly defined tools. All three platforms now extend well beyond where they started, with overlap across data engineering, warehousing, AI, governance, and real-time workloads.

What stands out even more is timing. That comparison was published more than half a year ago, and the platforms have kept evolving rapidly since. Microsoft keeps expanding Fabric as an end-to-end SaaS platform, Databricks is deepening its lakehouse and AI capabilities, and Snowflake is broadening its cloud data platform story well beyond traditional warehousing.

This brings me back to one of the first things I learned in IT: it is okay to be biased about technology, as long as that bias is grounded in business reality. The best platform is not the one with the longest feature list. It is the one that best fits your people, your environment, and your ability to execute.

Quick Comparison

