Firewall capabilities fall short in cloud environments like Microsoft Azure because Azure and other major public cloud providers offer limited access to their APIs. This creates a problem for enterprises that look to security independent software vendors (ISVs) to enhance their security capabilities in the cloud the same way they would in the data center.

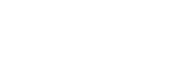

High availability is one capability that cloud firewall appliances lack. Traditionally, an ISV such as Palo Alto dedicates a network interface to replication between multiple Palo Alto devices. In the cloud, Palo Alto does not support the interface-based replication it offers on-premises. As an alternative, Palo Alto recommends the setup shown in the diagram below:

You can find the template deployment and documentation here

This deployment uses an Application Gateway (a layer 7 load balancer in Azure) for inbound and outbound Internet traffic from the Palo Alto devices, distributing that traffic to the correct interface on either device. The issue is that the Application Gateway supports only one front-end public IP address. If you have multiple applications that need Internet access, you either have to repeat this setup for each application, which is time-consuming and labor-intensive, or you are out of luck.

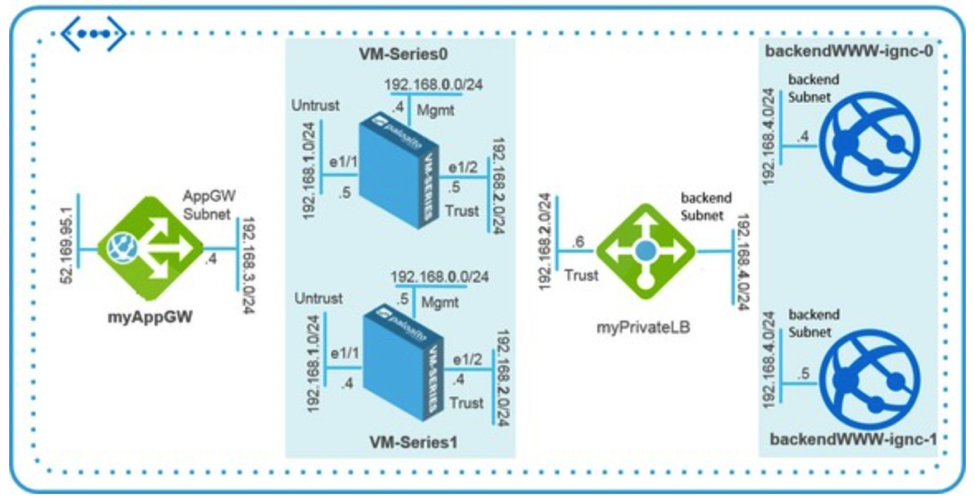

While exploring better ways to provide high availability for Palo Alto in the cloud for our clients, Daymark architected the alternative depicted below:

This deployment still relies on an Azure load-balancing service for high availability across the Palo Alto devices, but instead of a layer 4 or layer 7 load balancer, it uses a DNS-based load balancer (Traffic Manager). With the DNS load balancer, customers are no longer limited to a single public IP address: they can attach as many public IP addresses as they need to the applications connected to the Untrust virtual network interfaces of the Palo Alto devices and load balance across them. Customers publish each application on two separate public IP addresses, one on each Palo Alto device, then put the DNS load balancer in front of those addresses and route the network traffic by priority. In this scenario, the network traffic for a specific application routes through the public IP address on the “active” Palo Alto device and automatically routes to the public IP address on the “passive” Palo Alto device if the active device ever fails.
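The priority routing described above can be illustrated with a small simulation. This is a minimal sketch of the selection logic only, not Traffic Manager's API or implementation: the `Endpoint` class, the `resolve` function, the endpoint names, and the IP addresses (taken from a documentation-only range) are all hypothetical, and the health flag stands in for whatever health probing the DNS load balancer performs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Endpoint:
    """One public IP address published for an application (hypothetical model)."""
    name: str
    public_ip: str
    priority: int        # lower number = preferred endpoint
    healthy: bool = True # stand-in for the result of a health probe


def resolve(endpoints: List[Endpoint]) -> Optional[Endpoint]:
    """Return the healthy endpoint with the lowest priority number.

    Returns None when no endpoint is healthy. That is a simplification
    made for this sketch; a real DNS load balancer may behave differently.
    """
    healthy = [e for e in endpoints if e.healthy]
    return min(healthy, key=lambda e: e.priority, default=None)


# One application published on both firewalls (illustrative values only).
app_endpoints = [
    Endpoint("fw-active-untrust", "203.0.113.10", priority=1),
    Endpoint("fw-passive-untrust", "203.0.113.20", priority=2),
]

# Normal operation: the answer points at the active firewall.
first_answer = resolve(app_endpoints)

# Simulate the active firewall failing its health check.
app_endpoints[0].healthy = False

# After the failure: the answer points at the passive firewall.
failover_answer = resolve(app_endpoints)
```

Each additional application would get its own pair of endpoints and its own priority list, which is what removes the single-public-IP constraint. Because the failover happens at the DNS layer, clients may keep using a cached answer until its TTL expires.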

This strategy allows customers to make the most of their two Palo Alto devices without having to increase their cloud spending. It also frees customers from being limited to a single public IP address for all of their applications as required with the layer 7 load balancer approach.

If you’re struggling with how to better protect your data in the cloud, Daymark is here to help. Contact us today to learn more about our expert Cloud Services.